Every company I’ve spoken with at the 50–100 person mark has a graveyard.

Not a literal one - a digital one. A Profit account nobody logs into. A Perdoo workspace that peaked in January. A Notion OKR template updated eight times before everyone quietly gave up.

The team blames itself. The vendor blames change management.

But nobody blames the product.

That’s the wrong diagnosis - and it costs you growth. The reason most teams abandon OKR software after one cycle isn’t a people problem. It’s a product design problem that could have been spotted on day one, if you knew where to look.

The Failure Doesn’t Happen at the End of the Quarter. It Happens in Week Two.

Most post-mortems focus on the wrong moment.

Teams point to the missed quarter, the stale dashboard, the Q4 retrospective where everyone admitted the tool wasn’t being used. But the abandonment decision is made much earlier - typically within the first two weeks of rollout.

Here’s what happens:

OKR software gets launched with real intent. A Head of Ops or RevOps lead spends a weekend configuring it. Kick-off goes well. Then, on day eight, a team lead tries to update a key result and hits three clicks, a dropdown they don’t understand, and a progress field that doesn’t match how their team actually measures things.

They close the tab. They don’t come back.

That single moment of friction - repeated across twelve team leads - is how a tool dies. Not with a decision, but with a tab that never gets reopened.

The Update Loop Is Where Every OKR Tool Lives or Dies

The core function of any OKR tool is deceptively simple:

It needs to make recording progress easier than avoiding it. That’s it.

Everything else - dashboards, integrations, alignment views - is downstream of whether people actually update the system.

Most tools fail this test badly. We’ve run hundreds of external user tests, and I’ve personally watched team leads at a 90-person SaaS company spend eight minutes trying to log a single check-in because the tool required a confidence score, a written comment, a linked initiative, and a status tag - all mandatory before saving.

Eight minutes. For one update.

Across a team of 40, each logging one update a week, that’s more than five hours a week of hidden tax that nobody budgeted for.
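To estimate that tax for your own team, here is a minimal back-of-the-envelope sketch. The eight minutes and the team of 40 come from the example above; the one-update-per-person-per-week cadence is an assumption, and the function name is purely illustrative.

```python
def weekly_update_tax_hours(minutes_per_update: float, people: int,
                            updates_per_person: int = 1) -> float:
    """Hours per week a team spends logging OKR updates.

    Assumes every person logs `updates_per_person` updates each week
    (default 1, i.e. a weekly check-in cadence - an assumption).
    """
    total_minutes = minutes_per_update * people * updates_per_person
    return total_minutes / 60


# The example above: 8 minutes per update across a team of 40.
slow = weekly_update_tax_hours(8, 40)
# The same team if an update took two minutes instead.
fast = weekly_update_tax_hours(2, 40)
print(f"8-minute updates: {slow:.1f} h/week")  # prints "8-minute updates: 5.3 h/week"
print(f"2-minute updates: {fast:.1f} h/week")  # prints "2-minute updates: 1.3 h/week"
```

Swap in your own update time and headcount. The point is the multiplier: every extra minute per update is paid once per person, every week.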

When the update cost exceeds the perceived value of the update, people stop updating. When people stop updating, the data goes stale. The tool is dead, even if the subscription is still active - and you end up paying for something no one uses.

The Four Product Failure Patterns I See Repeatedly

After watching dozens of rollouts succeed and fail, I’ve seen the abandonment reasons compress into four product-level failure patterns. None of them are about OKR methodology. All of them are about design.

- Mandatory field overload: The OKR tool forces completeness before it earns trust. Contributors are asked to fill in six fields per update on day one, before the habit has formed. Compliance drops immediately.

- Separation from actual work: OKRs live in one tool, tasks live in Jira or Linear, and there’s no lightweight bridge between them. Contributors have to context-switch into the OKR tool deliberately - it never shows up where work happens. Deliberate context-switching doesn’t survive a busy quarter.

- Ownership ambiguity baked in: The tool allows team-level assignments without forcing individual owners. “Product team” owns the key result. Three people assume one of the others is tracking it. The problem? Nobody is.

- Reporting-first interface: The homepage is an executive dashboard, not a contributor’s daily view. The tool signals immediately that it was built for the person reviewing work, not the person doing it. Contributors feel like they’re updating a reporting machine, not using a tool that drives impact.

Any one of these is enough to kill adoption. Most failing tools have three or four.

Why “Change Management” Is a Vendor Excuse

When a tool fails, vendors almost always attribute it to insufficient change management - not enough training, not enough executive sponsorship, not enough internal champions. This framing is self-serving and worth pushing back on directly.

Slack didn’t need a change management program. Neither did Notion, Linear, or Figma.

Tools that solve a real problem in an intuitive way get adopted because they reduce friction, not because someone ran an internal adoption campaign. If your OKR tool requires a dedicated rollout strategy just to get people to use it, the tool has a design problem - not your team.

A Head of Strategy at a 110-person fintech put it bluntly when I spoke with her:

“We ran three lunch-and-learns, sent weekly reminder emails, and still had 40% of the team not logging in by week six. At some point you have to ask whether the tool is the problem.”

It was.

The Graveyard Gets Expensive Faster Than You Think

The obvious cost is the wasted subscription ($5–10 per user).

But the compounding costs are harder to see and significantly larger.

- Re-onboarding cost: Every tool switch means re-entering goals, retraining teams, and rebuilding the habits you failed to build the first time. Each cycle takes two to four weeks of a senior operator’s attention.

- Credibility erosion: Every failed OKR rollout makes the next one harder. Teams remember the last tool that didn’t work. “We tried this before” is the hardest objection to overcome internally - and it gets louder with each abandoned system.

- Quarter-lag: When a tool fails mid-cycle, the quarter it was supposed to support is effectively untracked. You don’t just lose the software; you lose visibility into three months of execution.

- Leadership trust damage: When the Head of Ops recommends a tool that collapses by Q2, it costs political capital. The next system proposal gets more scrutiny, slower approval, and a shorter leash.

One failed rollout at a 75-person B2B services company cost their VP of Operations an estimated six weeks of recovery time - re-exporting data, rebuilding OKRs in Notion, and running manual check-ins for the rest of the quarter.

The vendor’s annual contract was $18K. The operational cost was significantly higher.

What Separates the Tools That Survive One Quarter From the Ones That Don’t

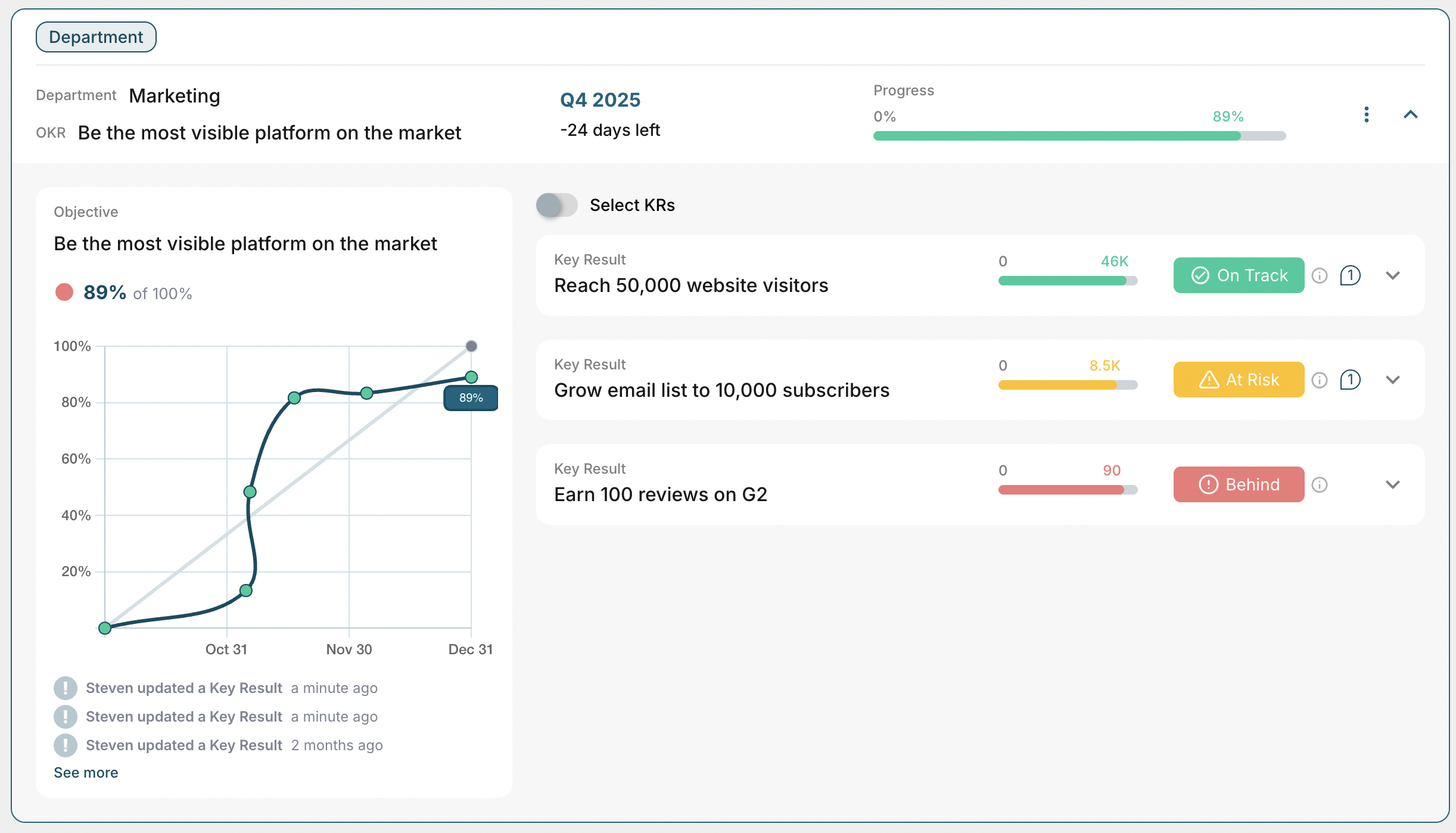

The OKR tools that actually stick at growth-stage companies share a design philosophy that’s worth understanding before you evaluate anything: they treat the individual contributor as the primary user, not the executive reviewer.

That single principle cascades into every meaningful product decision. The homepage surfaces what you own and what needs updating - not a company-wide alignment tree.

Updates take one click, not a form. Ownership is part of the process, not an afterthought. Progress is visible to the whole team by default, creating accountability without surveillance.

The tool should feel like a system that helps contributors stay on track - not one that helps leadership check whether contributors are on track.

That distinction sounds subtle, but it becomes obvious the moment a team lead tries to use the tool.

Before You Evaluate Another Tool, Run This Audit

If you’ve been through at least one failed OKR software rollout, the worst thing you can do is repeat the same evaluation process.

Most buying decisions happen in demos, and demos are designed to show the product at its best - not to simulate what week three looks like for a team lead who’s behind on a sprint.

Before you sign anything, do this: take your most skeptical team lead - not your most enthusiastic one - and ask them to complete a real update with no guidance. Time it.

Watch where they hesitate. If it takes more than two minutes or requires a single explanation from you, that hesitation is a preview of your Q2.

The graveyard grows one abandoned tab at a time. The way to stop adding to it isn’t better change management - it’s buying a tool that doesn’t need it.

What Makes OKRs Tool Different

OKRs Tool is part of the graveyard, too.

We’ve had hundreds of organizations sign up, try it, and leave. That’s the reality of building in this category. But here’s the part that matters: the teams that come back - and subscribe - stay.

I built OKRs Tool from the ground up for the individual contributor.

Before writing a line of code, I ran OKRs for four years inside operating teams. I know what week three feels like. I know what Friday afternoon updates feel like. And I know the one habit that actually determines whether a system survives: the weekly two-minute progress check.

So we designed the product around that habit.

Here’s what that means in practice:

- Weekly reminder nudges that pull contributors back into the system before momentum drops.

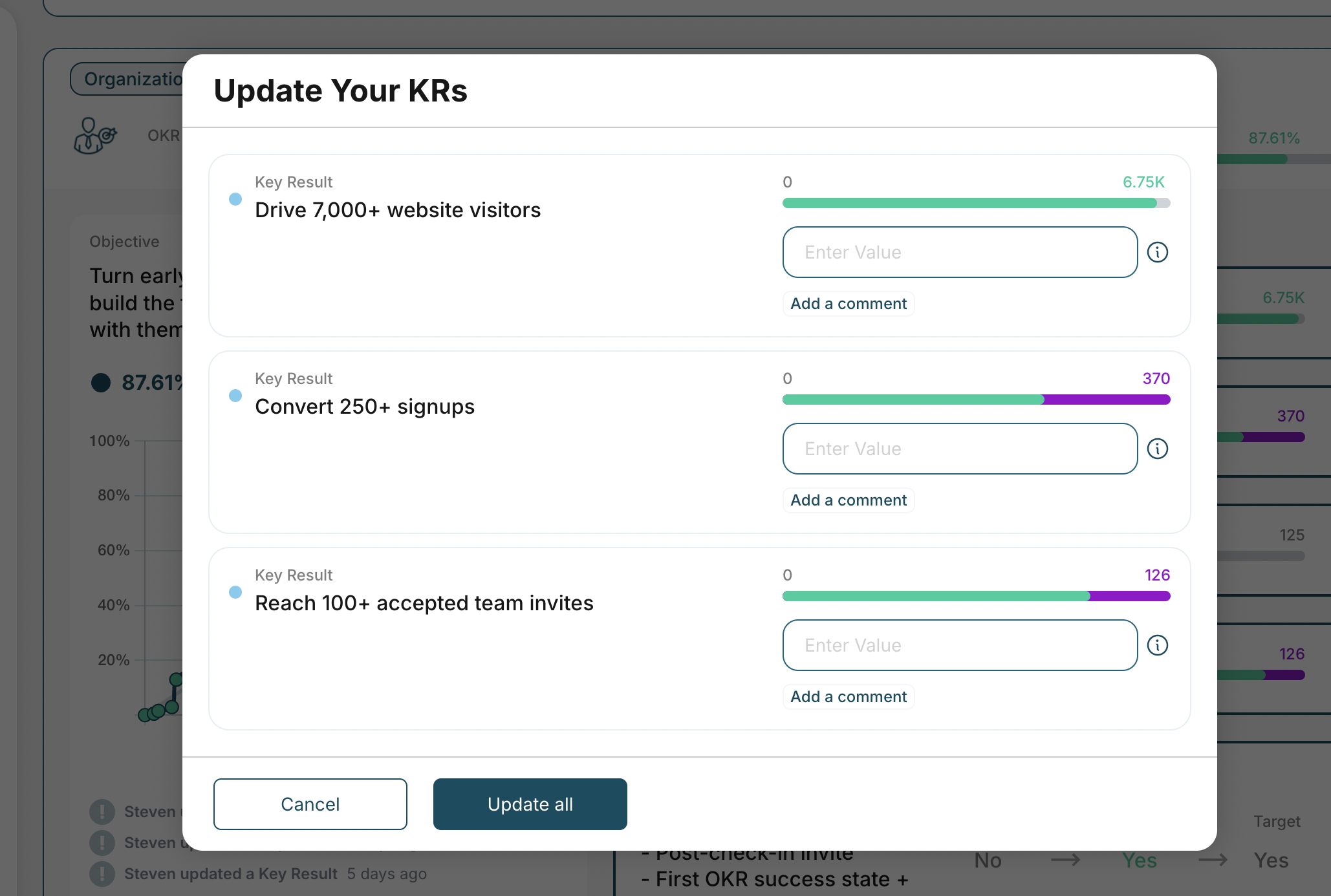

- Bulk KR updates in seconds, because no one should have to click into six separate screens to log progress.

- Optional comments, attachments, and initiatives - available when useful, invisible when not.

We run external user tests every month. We get direct feedback from customers every week. And we ship improvements based on usability and performance issues - not on requests for more executive dashboards.

OKRs Tool is intentionally simple. In fact, you shouldn’t be spending more than 30 seconds per week in the system. If you are, we’ve failed the design test.

Want to see whether it feels different? Try it yourself. It’s free for up to 5 users.